If the idea of soundstage is "sounds larger", then it's something achievable with EQ. I sold my HD800 years ago after getting better at PEQing my headphones.
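
For anyone who hasn't used PEQ: a single "peaking" band is just a biquad filter, and you can check what it does to the response before loading it. This is only a rough sketch using the widely used RBJ "Audio EQ Cookbook" peaking formulas. The function names, the 48 kHz sample rate and the example band (6 kHz, -4 dB, Q 2) are made up for illustration, not a recommended setting for any headphone.

```python
import cmath
import math


def peaking_biquad(f0, gain_db, q, fs=48000.0):
    """Coefficients (b, a) of one PEQ peaking band (RBJ cookbook form)."""
    a_lin = 10.0 ** (gain_db / 40.0)   # square root of the linear peak gain
    w0 = 2.0 * math.pi * f0 / fs       # centre frequency in rad/sample
    alpha = math.sin(w0) / (2.0 * q)   # bandwidth term derived from Q
    cos_w0 = math.cos(w0)
    b = (1.0 + alpha * a_lin, -2.0 * cos_w0, 1.0 - alpha * a_lin)
    a = (1.0 + alpha / a_lin, -2.0 * cos_w0, 1.0 - alpha / a_lin)
    return b, a


def gain_db_at(b, a, f, fs=48000.0):
    """Magnitude of the filter in dB at frequency f (Hz)."""
    z1 = cmath.exp(-1j * 2.0 * math.pi * f / fs)  # z^-1 on the unit circle
    num = b[0] + b[1] * z1 + b[2] * z1 * z1
    den = a[0] + a[1] * z1 + a[2] * z1 * z1
    return 20.0 * math.log10(abs(num / den))


# Hypothetical band: cut 4 dB at 6 kHz with Q = 2.
b, a = peaking_biquad(6000.0, -4.0, 2.0)
for f in (100.0, 3000.0, 6000.0, 12000.0):
    print(f"{f:>7.0f} Hz: {gain_db_at(b, a, f):+.2f} dB")
```

The band hits the requested gain at its centre frequency and fades back towards 0 dB away from it. A full PEQ profile is several of these bands in series.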

If it is "even with a stereo recording on headphones, I can hear male vocalist n°3 30° behind me to my right", then it's IMO just a lucky combination: the effects applied to that vocalist in the mix (EQ, reverb, etc.) + the FR at your eardrum happen to coincide with your own HRTF. The results may not be consistent from recording to recording, or even from one element to another within the same recording, since these effects are "baked in". Change the EQ and you may lose the impression that "male vocalist n°3" was behind you to the right, turning it into an undefined source, but gain the impression that "female vocalist n°2" is now 20° to your left in front of you (if you're lucky). That's basically been my life with headphones and stereo recordings. Some individual elements of a track may jump out at me in a very spatially defined way, but most don't, and EQing headphones shifts that around.

With the advent of object-based recording systems (or game engines) I'm now rather looking into what sort of FR at my own eardrum would be most convincing with generic HRTF profiles (if that is possible at all, which I'm not convinced of)... but since those profiles will vary depending on the implementation, I'm not sure that a single curve is desirable. Right now I'm mostly focusing on Apple's interpretation of object-based recordings (Spatial Audio).

Honestly I can't wait to get object based formats + individualised HRTFs + headphones that can deliver an

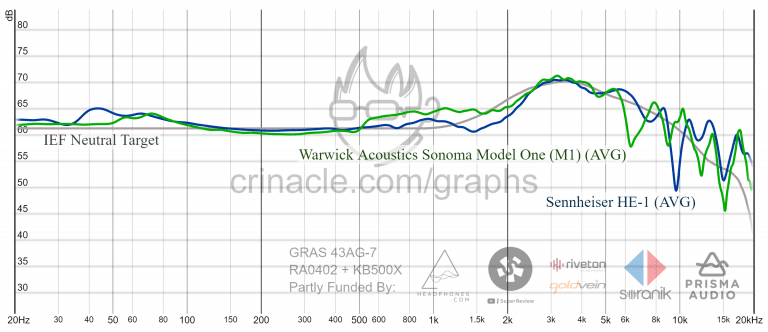

exact FR at your drum even above 1kHz (big problem right now) to finally get truly convincing surround sound simulation and proper "soundstage"

. Maybe that's the only truly convincing way to make sure that both "male vocalist n°3" and "female vocalist n°2" are both very specifically spatially defined at all times for

all of us

.